Building Scalable Data Lakehouses

Building Scalable Data Lakehouses: Unlocking the Power of Databricks and AWS

Introduction:

In the dynamic field of data management, the data lakehouse concept is revolutionizing how organizations manage their data. This blog post aims to help data professionals, enthusiasts, and learners grasp the practical implementation of scalable data lakehouses using advanced platforms like Databricks and AWS. By the end, you will have the insights to strengthen your data infrastructure and succeed in the data-driven environment.

Understanding the Data Lakehouse Paradigm:

1.1 Definition and Benefits:

A data lakehouse is an innovative approach to data management that blends the strengths of data lakes and data warehouses. It offers a unified solution that combines the flexibility and scalability of data lakes with the structured organization of data warehouses. Data lakehouses are gaining popularity for the following reasons:

- Scalability: Data lakehouses can efficiently handle massive amounts of data, making them ideal for organizations dealing with big data. They can easily scale to manage varying data volumes.

- Flexibility: Data lakehouses can accommodate diverse data types and use cases, from structured to semi-structured and unstructured data. This versatility caters to a wide range of business needs.

- Cost-Effectiveness: By optimizing storage and computing resources, data lakehouses provide a cost-efficient solution. They achieve this through efficient resource allocation and streamlined data processing in a unified environment.

1.2 Databricks and AWS: A Dynamic Partnership:

The powerful alliance between Databricks and AWS forms the foundation of modern data lakehouse architecture.

- Databricks: Databricks, with its Unified Data Analytics Platform, offers a seamless and integrated environment for building and managing data lakehouses. It provides a single, cohesive platform for data processing, analytics, and machine learning, simplifying the entire data pipeline.

- AWS: Amazon Web Services (AWS) contributes a robust and scalable cloud infrastructure. With services like Amazon S3 for scalable storage, AWS Glue for seamless data integration, and Amazon Redshift for efficient data warehousing, AWS forms the backbone of a powerful data lakehouse infrastructure.

Databricks and AWS join forces to provide data professionals with a powerful ecosystem for building, managing, and analyzing data efficiently and effectively.

Exploring the Latest Advancements in Data Lakehouse Architecture:

2.1 Delta Lake: The Game-Changer:

Delta Lake is an open-source storage layer that has significantly transformed data lakehouses. It prioritizes reliability and performance, ensuring data integrity and consistency.

- ACID Transactions: Delta Lake guarantees data consistency through ACID (Atomicity, Consistency, Isolation, Durability) transactions. This ensures accurate data updates, even in complex and concurrent scenarios.

- Time Travel: A unique and innovative feature, Time Travel, enables data versioning and rollback. It allows data professionals to explore historical data, providing invaluable insights for analysis, troubleshooting, and decision-making.

2.2 Lakehouse Architecture Patterns:

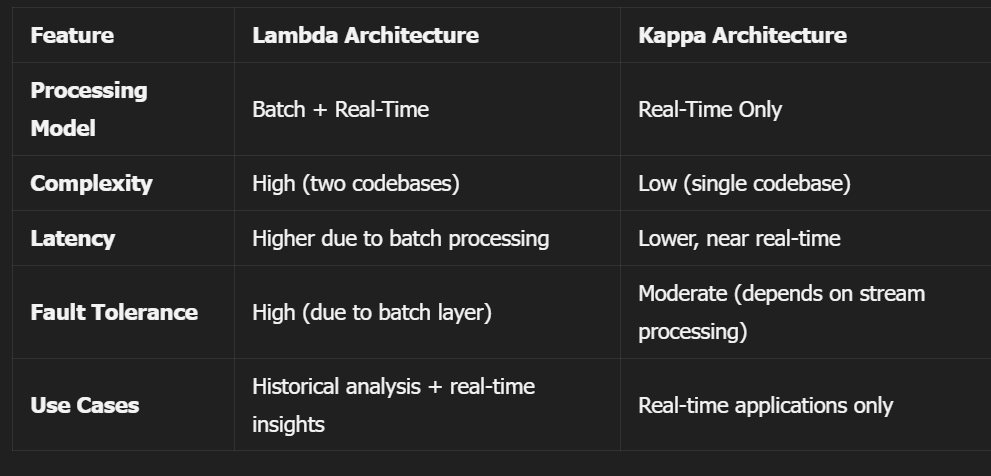

Lambda Architecture:

The Lambda Architecture merges batch and stream processing to provide real-time analytics. It processes data in parallel for low-latency, high-throughput processing, making it suitable for time-sensitive applications.

Kappa Architecture:

Kappa Architecture streamlines data processing through a unified stream-based approach, removing the need for batch processing. This results in more efficient and manageable data pipelines.

2.3 Databricks Lakehouse Platform Features:

Databricks provides a comprehensive suite of tools to enhance the data lakehouse experience:

- Delta Live Tables: These tools simplify data modeling and management, making it easier to define and manage data tables, ensuring a structured and organized data environment.

- Databricks SQL: A powerful analytics tool, enabling users to query data using SQL and integrate with popular BI tools like Tableau and Power BI for interactive and insightful data exploration.

- MLflow: Streamlines machine learning workflows, allowing data scientists to track experiments, manage and deploy models efficiently, and accelerate the ML lifecycle.

A Practical Guide to Building Scalable Lakehouses:

3.1 Target Audience and Their Interests:

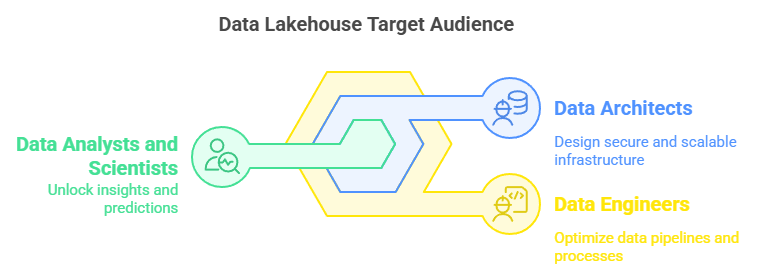

This guide caters to a diverse range of professionals, each with unique interests and objectives:

- Data Engineers: Focused on efficient data processing and management, they seek streamlined ETL processes and best practices in data engineering to optimize data pipelines.

- Data Architects: Responsible for designing secure and scalable data infrastructure, they aim to leverage cutting-edge technologies for optimal data architecture and performance.

- Data Analysts and Scientists: Driven by the need for advanced analytics and ML capabilities, they strive to unlock the power of data for insights, predictions, and informed decision-making.

3.2 Step-by-Step Guide:

Choose the Right Cloud Provider:

AWS stands out as a top choice for data lakehouses, offering a comprehensive suite of services. Its scalability, security features, and seamless integration capabilities make it an ideal partner for building robust data lakehouses.

Data Ingestion and Storage:

- Utilize AWS S3 for scalable and cost-effective storage. Its object storage architecture is well-suited for handling large-scale data volumes, ensuring efficient data management.

- Consider AWS Lake Formation for secure and managed data ingestion. It provides a secure service for data ingestion and cataloging, simplifying the data intake process.

Data Processing and Transformation:

- Leverage Databricks for powerful ETL processes. Its advanced data engineering capabilities simplify data transformation and integration, ensuring data consistency.

- Use Databricks’ Delta Live Tables for streamlined data modeling, making data management more accessible and efficient.

Data Analytics and Visualization:

- Databricks SQL is an interactive analytics tool that allows users to query data, create reports, and collaborate. It works seamlessly with popular BI tools for advanced visualization and dashboard creation to offer a complete data analysis solution.

- Integrating with Tableau or Power BI improves data visualization, enabling users to create informative dashboards and interactively explore data.

Machine Learning:

- Databricks provides an ML-optimized environment, ideal for data scientists.

- Utilize MLflow to manage the entire ML lifecycle, from experiment tracking to model deployment, ensuring a seamless machine learning workflow.

Security and Governance:

- Implement AWS Lake Formation for fine-grained data access control, ensuring data security and compliance with industry standards.

- Leverage AWS Identity and Access Management (IAM) for secure user authentication and authorization, protecting data from unauthorized access.

Monitoring and Optimization:

- Use Databricks and AWS monitoring tools to track performance and identify potential bottlenecks.

- Optimize data pipelines and infrastructure based on insights gained from monitoring, ensuring a high-performing data lakehouse.

Conclusion:

Establishing scalable data lakehouses on Databricks and AWS is a strategic move for organizations looking to maximize their data utilization. This guide helps data professionals build a reliable, adaptable, and cost-effective data infrastructure, keeping them competitive in the data-driven landscape.

It’s crucial to acknowledge the strength of the data lakehouse model in today’s data-focused environment. By harnessing the collaboration between Databricks and AWS, organizations can uncover new opportunities, extract valuable insights, and gain a competitive edge. Stay inquisitive, stay informed, and keep tapping into the extensive capabilities of data lakehouses!

References:

This article draws valuable insights from various sources, including official documentation, research papers, and industry reports. Here are the key references:

- Databricks. (2025). Databricks Documentation. Databricks, Inc.

- Amazon Web Services. (2025). AWS Documentation. Amazon Web Services, Inc.

- Delta Lake Project. (2025). Delta Lake Overview. Linux Foundation.

- Databricks. (2025). MLflow Documentation. MLflow Open Source Project.

- Kreps, J. (2014). Lambda and Kappa Architecture: Rethinking Big Data Processing Architectures. O’Reilly Media.

- Armbrust, M., Ghodsi, A., Zaharia, M., & Databricks. (2021). Lakehouse: A New Generation of Open Platforms that Unify Data Warehousing and Advanced Analytics. In Proceedings of the VLDB Endowment, 14(12), 3411-3424.

- AWS Lake Formation Team. (2025). AWS Lake Formation Documentation. Amazon Web Services.